The Caddy ingress image was hardcoded in the component manifest and had no update path shy of cluster recreate or manual kubectl patch. That forced woodburn to run an out-of-band ansible playbook to bump Caddy, and broke the "spec.yml is source of truth" model.

Changes:

- spec.yml: new `caddy-ingress-image` key (default `ghcr.io/laconicnetwork/caddy-ingress:latest`).

- Deployment manifest: `strategy: Recreate` on the Caddy Deployment. This is required because the pod binds hostPort 80/443, which prevents any rolling update from completing (the new pod hangs Pending forever, waiting for the old pod to release the ports).

- install_ingress_for_kind: accepts caddy_image and templates the manifest before applying, following the same pattern as the existing acme-email templating.

- update_caddy_ingress_image: patches the running Caddy Deployment when the spec image differs from the live image. No-op if they match. Returns True if a patch was applied so the caller can wait for the rollout.

- deploy_k8s._setup_cluster: on cluster reuse (ingress already up), reconciles the running image against the spec. The install path is unchanged; only the "already running, maybe needs update" branch is new.

Cluster-scoped caveat: caddy-system is shared by every deployment on the cluster, so the spec value in any one deployment rolls Caddy for all of them; the last `deployment start` wins. Documented in deployment_patterns.md alongside the other cluster-scoped concerns (kind-mount-root, namespace ownership).

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>
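The "patch only when the spec image differs from the live image" rule can be sketched as a small pure function. This is a rough illustration rather than the project's code: `build_image_patch` and the container name `caddy` are hypothetical, and the real `update_caddy_ingress_image` presumably reads the live image from the cluster instead of taking it as an argument.

```python
from typing import Optional

# Default taken from the commit message above.
DEFAULT_CADDY_IMAGE = "ghcr.io/laconicnetwork/caddy-ingress:latest"


def build_image_patch(live_image: str, spec_image: Optional[str],
                      container_name: str = "caddy") -> Optional[dict]:
    """Return a Deployment patch body, or None when no update is needed.

    container_name is an assumption made for this sketch only.
    """
    wanted = spec_image or DEFAULT_CADDY_IMAGE
    if wanted == live_image:
        return None  # no-op: spec and live image already match
    return {
        "spec": {
            "template": {
                "spec": {
                    "containers": [{"name": container_name, "image": wanted}]
                }
            }
        }
    }


def needs_rollout_wait(patch: Optional[dict]) -> bool:
    """Mirror the 'returns True if a patch was applied' contract."""
    return patch is not None
```

A caller would apply the returned body to the Caddy Deployment and wait for the rollout only when `needs_rollout_wait` is true.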


Stack Orchestrator

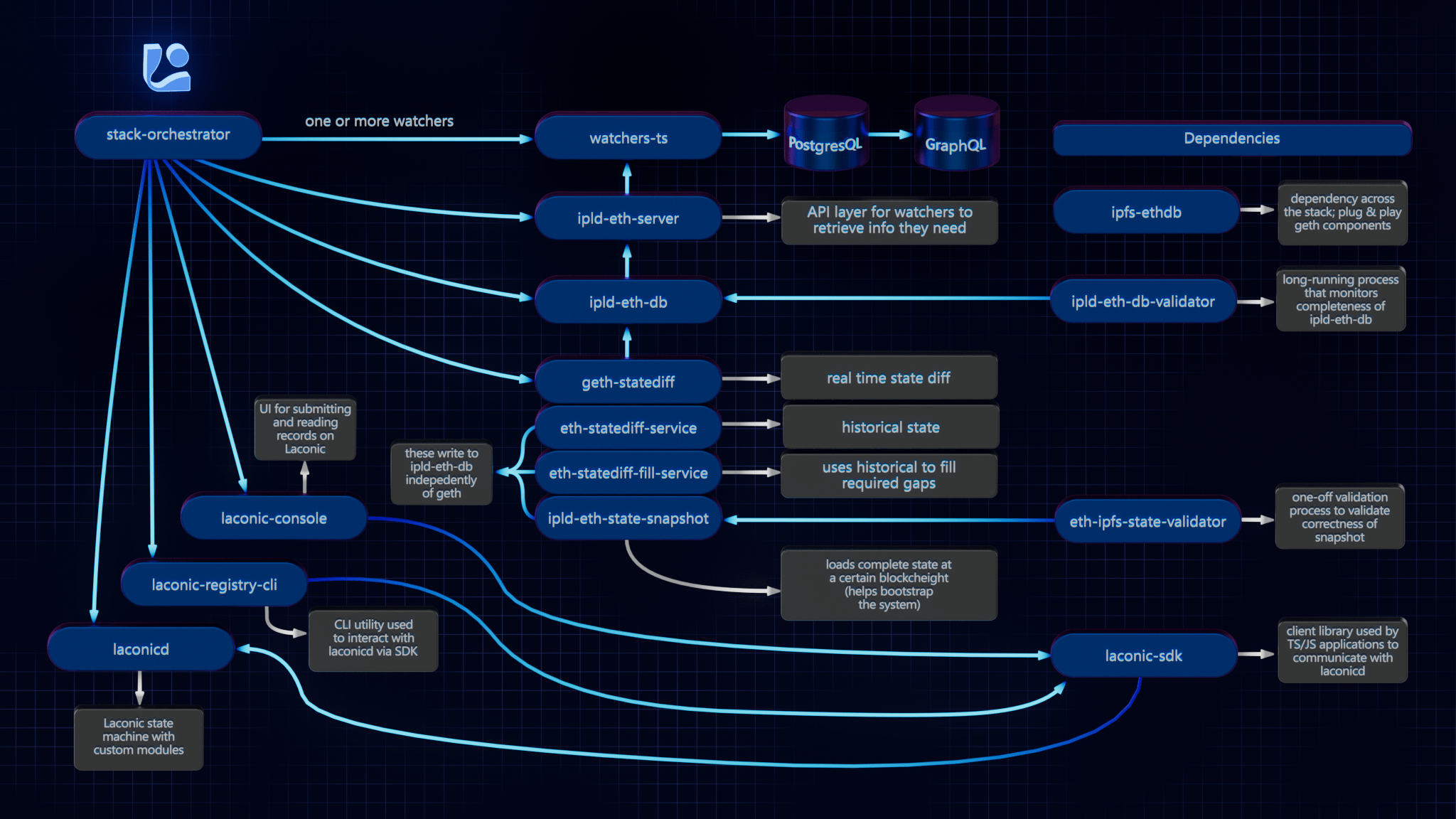

Stack Orchestrator allows building and deployment of a Laconic Stack on a single machine with minimial prerequisites. It is a Python3 CLI tool that runs on any OS with Python3 and Docker. The following diagram summarizes the relevant repositories in the Laconic Stack - and the relationship to Stack Orchestrator.

Install

To get started quickly on a fresh Ubuntu instance (e.g., Digital Ocean), try this script. WARNING: always review scripts before running them so that you know what is happening on your machine.

For any other installation, follow along below and adapt these instructions based on the specifics of your system.

Ensure that the following are already installed:

- Python3: `python3 --version` >= `3.8.10` (the Python3 shipped in Ubuntu 20+ is good to go)

- Docker: `docker --version` >= `20.10.21`

- jq: `jq --version` >= `1.5`

- git: `git --version` >= `2.10.3`
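The minimum versions listed above can be checked with a few lines of Python. This is a minimal sketch: the helper names `parse_version` and `meets_minimum` are invented for illustration, and the sample strings only approximate what each tool prints for `--version`.

```python
import re
from typing import Tuple

# Minimum versions from the prerequisites list.
MINIMUMS = {
    "python3": "3.8.10",
    "docker": "20.10.21",
    "jq": "1.5",
    "git": "2.10.3",
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Extract the first dotted number from output such as 'jq-1.6'."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no version found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(tool: str, version_output: str) -> bool:
    """Compare a tool's reported version against its listed minimum."""
    return parse_version(version_output) >= parse_version(MINIMUMS[tool])


if __name__ == "__main__":
    print(meets_minimum("docker", "Docker version 24.0.5, build ced0996"))
    print(meets_minimum("git", "git version 2.9.0"))
```

Comparing integer tuples avoids the string-comparison trap where "2.9" would sort above "2.10".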

Note: if installing docker-compose via a package manager on Linux (as opposed to Docker Desktop), you must install the plugin, e.g.:

mkdir -p ~/.docker/cli-plugins

curl -SL https://github.com/docker/compose/releases/download/v2.11.2/docker-compose-linux-x86_64 -o ~/.docker/cli-plugins/docker-compose

chmod +x ~/.docker/cli-plugins/docker-compose

Next, decide on a directory where you would like to put the stack-orchestrator program. Typically this would be a "user" binary directory such as ~/bin or perhaps /usr/local/laconic or possibly just the current working directory.

Now, having selected that directory, download the latest release from this page into it (we're using ~/bin below for concreteness but edit to suit if you selected a different directory). Also be sure that the destination directory exists and is writable:

curl -L -o ~/bin/laconic-so https://github.com/cerc-io/stack-orchestrator/releases/latest/download/laconic-so

Give it execute permissions:

chmod +x ~/bin/laconic-so

Ensure laconic-so is on the PATH

Verify operation (your version will probably be different, just check here that you see some version output and not an error):

laconic-so version

Version: 1.1.0-7a607c2-202304260513

Save the distribution url to ~/.laconic-so/config.yml:

mkdir -p ~/.laconic-so

echo "distribution-url: https://github.com/cerc-io/stack-orchestrator/releases/latest/download/laconic-so" > ~/.laconic-so/config.yml

Update

If Stack Orchestrator was installed using the process described above, it is able to subsequently self-update to the current latest version by running:

laconic-so update

Usage

Each stack comes with its own instructions for running it; choose the stack that matches your use case.

Deployment Types

- compose: Docker Compose on local machine

- k8s: External Kubernetes cluster (requires kubeconfig)

- k8s-kind: Local Kubernetes via Kind - one cluster per host, shared by all deployments

External Stacks

Stacks can live in external git repositories. Required structure:

    <repo>/
      stack_orchestrator/data/
        stacks/<stack-name>/stack.yml
        compose/docker-compose-<pod-name>.yml
        deployment/spec.yml
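A quick way to confirm that an external repository follows this layout is to check for the three files. The sketch below assumes all three entries sit under stack_orchestrator/data/, as the listing suggests. `missing_stack_paths` is a hypothetical helper, not part of laconic-so.

```python
from pathlib import Path
from typing import List


def missing_stack_paths(repo: Path, stack_name: str, pod_name: str) -> List[str]:
    """Return the expected files (relative to the repo) that do not exist."""
    data = Path("stack_orchestrator") / "data"
    expected = [
        data / "stacks" / stack_name / "stack.yml",
        data / "compose" / f"docker-compose-{pod_name}.yml",
        data / "deployment" / "spec.yml",
    ]
    # as_posix() keeps the reported paths identical across platforms.
    return [p.as_posix() for p in expected if not (repo / p).is_file()]


if __name__ == "__main__":
    for path in missing_stack_paths(Path("."), "my-stack", "my-pod"):
        print(f"missing: {path}")
```

An empty list means the repository has every file the layout requires.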

Deployment Commands

# Create deployment from spec

laconic-so --stack <path> deploy create --spec-file <spec.yml> --deployment-dir <dir>

# Start (creates cluster on first run)

laconic-so deployment --dir <dir> start

# GitOps restart (git pull + redeploy, preserves data)

laconic-so deployment --dir <dir> restart

# Stop

laconic-so deployment --dir <dir> stop
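When these commands are driven from a script, building them as argument lists avoids shell-quoting problems. The following is a minimal sketch using only the subcommands and flags shown above. The function names `create_cmd` and `lifecycle_cmd` are made up for illustration.

```python
import shlex
from typing import List

LIFECYCLE_ACTIONS = ("start", "restart", "stop")


def create_cmd(stack: str, spec_file: str, deployment_dir: str) -> List[str]:
    """Argument list for creating a deployment from a spec file."""
    return [
        "laconic-so", "--stack", stack, "deploy", "create",
        "--spec-file", spec_file, "--deployment-dir", deployment_dir,
    ]


def lifecycle_cmd(deployment_dir: str, action: str) -> List[str]:
    """Argument list for start, restart, or stop on an existing deployment."""
    if action not in LIFECYCLE_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    return ["laconic-so", "deployment", "--dir", deployment_dir, action]


if __name__ == "__main__":
    # Print the commands instead of running them; pass a list to
    # subprocess.run(cmd, check=True) to execute one.
    print(shlex.join(create_cmd("./my-stack", "spec.yml", "./my-deployment")))
    print(shlex.join(lifecycle_cmd("./my-deployment", "start")))
```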

spec.yml Reference

    stack: stack-name-or-path
    deploy-to: k8s-kind
    network:
      http-proxy:
        - host-name: app.example.com
          routes:
            - path: /
              proxy-to: service-name:port
      acme-email: admin@example.com
    config:
      ENV_VAR: value
      SECRET_VAR: $generate:hex:32$  # Auto-generated, stored in K8s Secret
    volumes:
      volume-name:
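The `$generate:hex:32$` placeholder can be pictured as a token that is swapped for a random hex string at deployment time. The sketch below is illustrative only and is not how laconic-so necessarily implements it: `expand_generated` is a hypothetical helper, and it assumes the number counts random bytes (so 32 yields 64 hex characters), which this reference does not state.

```python
import re
import secrets
from typing import Dict

# Matches tokens of the form $generate:hex:<N>$.
GENERATE_TOKEN = re.compile(r"\$generate:hex:(\d+)\$")


def expand_generated(config: Dict[str, str]) -> Dict[str, str]:
    """Replace each generate token in the config values with random hex."""

    def _replace(match: "re.Match[str]") -> str:
        n_bytes = int(match.group(1))  # assumption: N is a byte count
        return secrets.token_hex(n_bytes)

    return {key: GENERATE_TOKEN.sub(_replace, value) for key, value in config.items()}


if __name__ == "__main__":
    expanded = expand_generated({"ENV_VAR": "value", "SECRET_VAR": "$generate:hex:32$"})
    print(expanded["ENV_VAR"], len(expanded["SECRET_VAR"]))
```

Using the `secrets` module rather than `random` matters here, since the value is meant to be a credential.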

Contributing

See CONTRIBUTING.md for the developer-mode install.

Platform Support

Native aarch64 is not currently supported. x64 emulation on ARM64 macOS should work (not yet tested).